RDM Beta AI Assistant?

Are any docs available yet for configuring the AI Assistant entry in RDM? I'm currently trying to use an Azure OpenAI provider but haven't been able to get any response other than errors.

My first question is about the providers. I see several GPT options in the dropdown, but the field also accepts free text, so I've been entering the model name as shown in Azure, 'DeepSeek-R1'. I've also tried various endpoint URLs and keys from AI Foundry, but none have worked yet. I'll try a model listed in the RDM provider dropdown next, but I assumed it would work with any model/provider. Is that not the case? Since AI Foundry also provides multiple endpoint URLs, I'm left with trial and error.

Any guidance on Azure OpenAI would be appreciated.

Thanks

JK

Devolutions Force Member (and Long time Devolutions Fan)

All Comments (18)

Hello John,

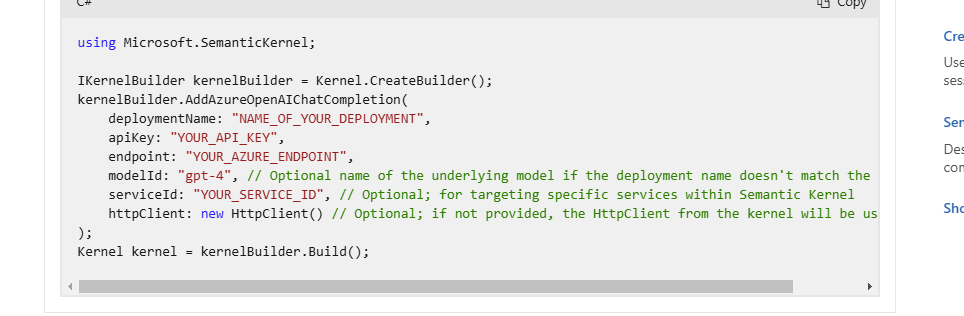

I'm the one who did the integration. Our integration uses Semantic Kernel:

https://learn.microsoft.com/en-us/semantic-kernel/concepts/ai-services/chat-completion/?tabs=csharp-AzureOpenAI%2Cpython-AzureOpenAI%2Cjava-AzureOpenAI&pivots=programming-language-csharp

I'm not sure what could be missing. Are you sure that the model you entered is deployed on your Azure resource?
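For illustration, here is a minimal sketch of why the deployment name matters with an Azure OpenAI endpoint. It assumes the standard Azure OpenAI REST route, where the deployment name is part of the URL path, so a name that is not deployed on the resource typically comes back as a 404. The resource name `my-resource` and the `api-version` value are placeholders, and this is not RDM's or Semantic Kernel's actual code.

```python
from urllib.parse import quote, urlencode


def azure_openai_chat_url(endpoint: str, deployment: str,
                          api_version: str = "2024-06-01") -> str:
    """Build the chat-completions URL for an Azure OpenAI deployment.

    The api_version default is an assumption; use the one your resource supports.
    """
    base = endpoint.rstrip("/")
    # The deployment name (not necessarily the catalog model name) goes in the path.
    path = f"/openai/deployments/{quote(deployment, safe='')}/chat/completions"
    return f"{base}{path}?{urlencode({'api-version': api_version})}"


if __name__ == "__main__":
    # Hypothetical resource name, for illustration only.
    print(azure_openai_chat_url("https://my-resource.openai.azure.com/", "gpt-4o"))
```

If the name typed into RDM differs from the deployment name shown on the Azure resource, the path above points at something that does not exist.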

Regards

David Hervieux

I've just managed to get the GPT-4o model to run using the endpoint containing "openai"; once I set the token count to 4096, it worked.
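Since AI Foundry shows several endpoint URLs, one quick way to tell them apart is by host name. The sketch below is only a rough aid: the suffix-to-service mapping reflects how these endpoints commonly appear in the Azure portal and is an assumption, as are the labels and the function name.

```python
from urllib.parse import urlparse

# Assumed mapping of host suffixes to endpoint families; verify against your portal.
KNOWN_SUFFIXES = {
    ".openai.azure.com": "azure-openai",
    ".services.ai.azure.com": "azure-ai-inference",
    ".models.ai.azure.com": "serverless-model-endpoint",
    ".cognitiveservices.azure.com": "azure-ai-services",
}


def classify_endpoint(url: str) -> str:
    """Return a rough label for an Azure AI endpoint URL, or 'unknown'."""
    host = (urlparse(url).hostname or "").lower()
    for suffix, label in KNOWN_SUFFIXES.items():
        if host.endswith(suffix):
            return label
    return "unknown"


if __name__ == "__main__":
    # Hypothetical resource name, for illustration only.
    print(classify_endpoint("https://my-resource.openai.azure.com/"))
```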

But I can't say the same for the DeepSeek-R1 model yet, even using the same steps that worked for the model above.

I'll look at the link you provided. I really want to be able to use custom models...

JK

Hello,

The list provided is only there to simplify the configuration. It's not linked to anything. For example, I could add DeepSeek-R1 to the choices, so I suspect that something is missing in your Azure setup, or maybe the name you entered is not the model name in the Azure catalog. I will ask my team to deploy it for me and test it if you can't get it working.

Regards

David Hervieux

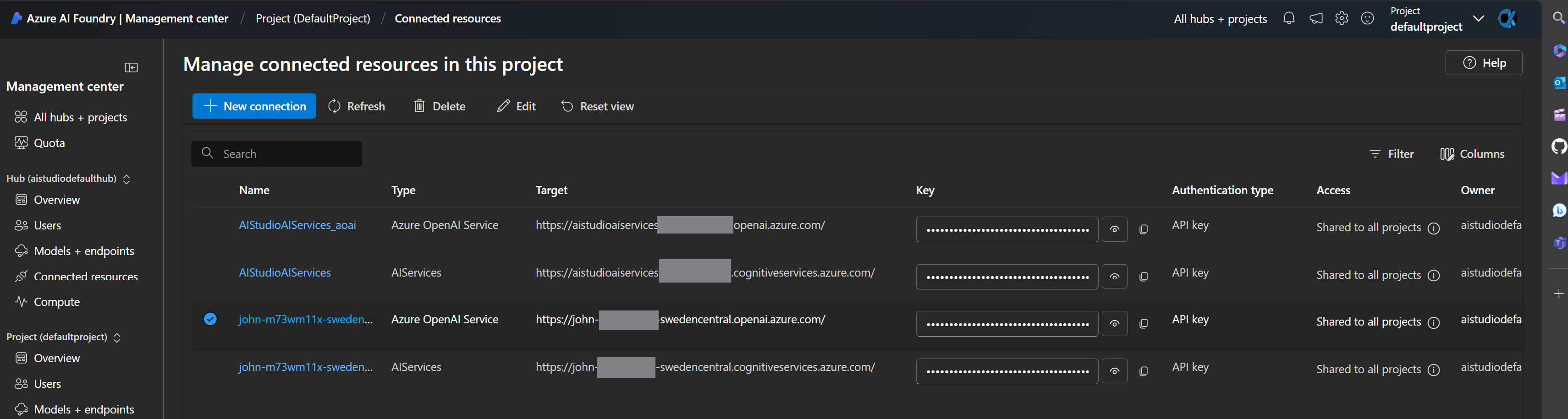

The bottom two are GPT-4o, which work; the top two are DeepSeek-R1, which don't, for some reason. Should any model work?

JK

I may retry deploying the DeepSeek-R1 model, as the one I deployed came from the GitHub Marketplace; perhaps there's something incompatible. Watch this space.

These AI Assistant entries: what functionality do they have other than just a chat?

JK

Hello,

You can use it to store your AI keys and test them. If you use the integration in File->Options, you can use it to proofread, summarize, and translate text in many places inside RDM, including the comment prompt or entry description. You can also use it to generate or improve your PowerShell scripts. I'm open to more ideas if you have some. The next big step is to automate RDM in natural language, with commands like "Close all my RDP sessions", but we are not there yet.

Regards

David Hervieux

I did wonder how that option differed from the entries.

JK

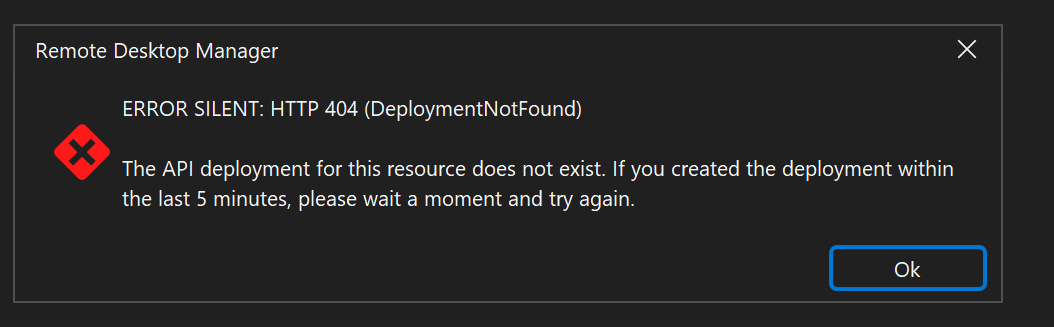

I definitely can't get models that aren't listed in the dropdown working, for some reason. My DeepSeek-R1 model is in the same project as GPT-4o, so it uses the same endpoint and key; only the provider or model differs, and I'm copying and pasting those from Azure.

I'm not quite at the method you mentioned yet. I'm just using an AI Foundry hub and projects, which I've been playing with for some time. The DeepSeek model also runs fine in the playground with the same endpoint and key I'm using in RDM.

Where would I find advanced logs for my AI Assistant entries? I've tried enabling the debug log settings, but I'm only seeing the opening and closing entries with HTTP 404 error codes. I can't see what exactly it can't find in the logs I have. Can you point me in the right direction to get some verbose debug logs for this?
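While waiting for more verbose logs, one option is to call the endpoint directly and read the error body, which usually names what was not found. A minimal sketch using only the Python standard library follows. The `probe` helper is hypothetical, the `api-key` header is the key-based authentication header that Azure OpenAI accepts, and the URL and payload are whatever is already configured in RDM.

```python
import json
import urllib.error
import urllib.request


def probe(url, api_key, payload, opener=urllib.request.urlopen):
    """POST a JSON payload and return (status, body_text), even on HTTP errors."""
    request = urllib.request.Request(
        url,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json", "api-key": api_key},
        method="POST",
    )
    try:
        with opener(request, timeout=30) as response:
            return response.status, response.read().decode("utf-8", "replace")
    except urllib.error.HTTPError as error:
        # For a 404, the body typically says which resource or deployment is missing.
        return error.code, error.read().decode("utf-8", "replace")
```

Calling `probe(url, key, {"messages": [{"role": "user", "content": "ping"}], "max_tokens": 16})` and printing the result shows the status code together with the service's own explanation, which the RDM log lines described above do not include.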

I'm about to start from "Add chat completion services to Semantic Kernel | Microsoft Learn" and see how I get on. I should be able to get by using Python.

JK

Hello,

We have been able to reproduce the issue and we will open a ticket with Microsoft.

Regards

David Hervieux

Hello,

I've been able to make it work, but we need a new provider named Azure Inference AI. This will be included in the beta soon.
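As a rough sketch of how the Azure AI model inference route differs from the Azure OpenAI one: in the public REST API, the model appears to be named in the JSON body rather than in the URL path, which would explain why a single endpoint can serve many models. The helper name, the `api-version` default, and the `max_tokens` default below are assumptions for illustration, not what RDM actually sends.

```python
import json


def azure_ai_inference_request(endpoint, model, messages,
                               api_version="2024-05-01-preview", max_tokens=4096):
    """Return (url, json_body) for a chat-completions call on the inference route."""
    base = endpoint.rstrip("/")
    # Accept the endpoint with or without the trailing /models segment.
    if not base.endswith("/models"):
        base += "/models"
    url = f"{base}/chat/completions?api-version={api_version}"
    # The model name travels in the body, not in the URL path.
    body = {"model": model, "messages": messages, "max_tokens": max_tokens}
    return url, json.dumps(body)


if __name__ == "__main__":
    # Hypothetical resource name, for illustration only.
    url, body = azure_ai_inference_request(
        "https://my-resource.services.ai.azure.com",
        "DeepSeek-R1",
        [{"role": "user", "content": "Hello"}],
    )
    print(url)
    print(body)
```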

Regards

David Hervieux

OK, as soon as I see the beta update I'll try it and let you know how it goes.

JK

FYI, the Azure inference provider works great and opens up many more models. I'd love to know what the roadmap for AI looks like. As mentioned in your blog, I really hope some sort of tool use can be implemented at a later stage. I've been toying with various MCP servers online, at as low a cost as possible. I went this route because it simplified using tools with my LLMs and RAG setups; coding tooling from scratch was still beyond me, which led me to MCP, which has many open-source solutions.

I don't expect AI to be completely integrated. I just hope that an MCP server with the required preset tools could possibly utilise various artifacts, though so far only the free MCP apps have been very useful.

Anyway, I really can't wait to see what magic you code next!

JK

I'm wondering, can we expect to see functions for the AI Assistant eventually, such as calling additional entries, or even generating them?

Just assumptions after seeing the additions rolling out...

Does sound cool though.

JK

Hello,

Indeed, this is something that we want to explore. I'm not sure if this will be in the next major release, but perhaps by the end of the year.

Regards

David Hervieux

All good. I've found that when it comes to AI stuff I have to step back and not go too far too quickly, or I end up getting lost in the world of possibilities lol. Poxy IT magpie syndrome is hard lol.

JK

Hi David, how's things?

Sorry, another query. First, I've started getting used to RDM's AI Assistant and discovering what it can do, and it's great. I also wondered whether the default AI Assistant uses a custom-trained model or an off-the-shelf one.

Back to my main topic for this post: does the RDM roadmap for the AI Assistant include plans to integrate RAG? I see Semantic Kernel Vector Stores described, but I have no idea whether instances could use their own local vector storage that talks to the default AI Assistant model. I realise that for users who are using their own custom models, specifically the Azure AI inference APIs in my case, this is already doable; my query relates to instances whose users can't use custom models with the AI Assistant. Additionally, I'm only thinking of RAG for previous chat history memory, which, although it wouldn't be easy to implement, must be easier than using RAG for RDM entry context, although both would be there in an ideal world.

Sorry to ramble on again, just airing the thoughts that come to me while working with RDM...

Thanks.

JK

Hello,

I'm not focusing a lot on AI for 2025.2, but this is definitely something I want to evaluate. Thank you for all your suggestions.

Regards

David Hervieux

My thought for RAG was that you could train the default model you're using, or add all your documentation, knowledge base, academy content, etc. to Azure AI Search as context data. That would be ideal.

JK
